A Workflow chatbot is a conversational interface powered by a pipeline where you define every step explicitly. You choose which nodes run, in what order, and how data flows between them. The pipeline runs the same sequence every time a message comes in, giving you full control over the chatbot’s behavior.

What can a Workflow chatbot do?

Start with the outcome you want:

- “Answer customer questions about orders, returns, and shipping using our help center articles.” The pipeline queries a knowledge base, feeds the results to an LLM, and returns a sourced answer every time.

- “Summarize uploaded documents and answer follow-up questions about them.” The pipeline uses a Chat File Reader node to process uploads and an LLM to generate summaries.

- “Collect user information step by step, then create a support ticket.” The pipeline uses a Data Collector node to gather required fields before calling an integration.

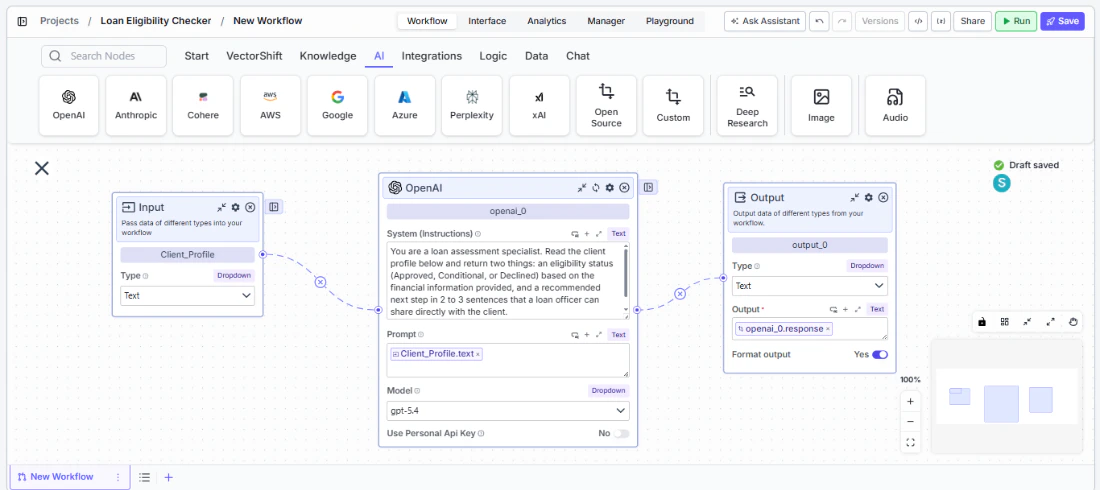

What a typical chatbot pipeline looks like

A Workflow chatbot is driven by a pipeline you build in the pipeline editor. Most chatbot pipelines use the same core nodes:

- Input node: receives the user’s chat message and passes it into the pipeline.

- Knowledge Base Reader node: queries your knowledge base for information relevant to the user’s message.

- LLM node: takes the user’s question, retrieved context, and conversation history, then generates a response.

- Chat Memory node: feeds conversation history to the LLM so it can reference earlier messages.

- Output node: returns the LLM’s response to the user in the chat interface.

Here is an example of a complete chatbot pipeline:

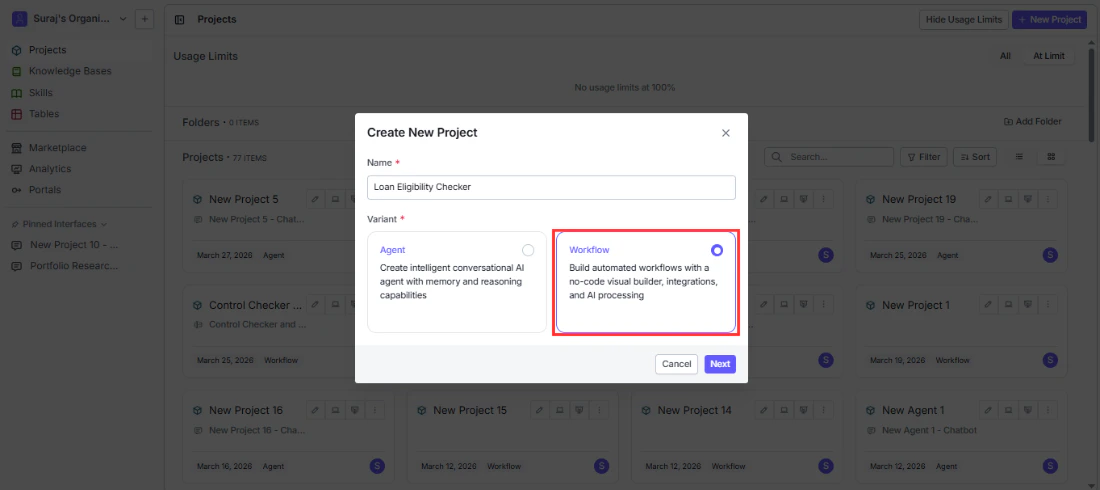
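
The data flow between these core nodes can be sketched in plain Python. This is only an illustration of the fixed sequence, not VectorShift's SDK or API: the function names, the `ChatMemory` class, and the toy knowledge base entries are all hypothetical stand-ins, and the `llm` function formats a string instead of calling a model.

```python
from dataclasses import dataclass, field


@dataclass
class ChatMemory:
    """Hypothetical stand-in for a Chat Memory node: stores prior turns."""
    turns: list = field(default_factory=list)

    def history(self) -> str:
        return "\n".join(f"{role}: {text}" for role, text in self.turns)


# Made-up articles for illustration; a real Knowledge Base Reader node
# would search your own indexed documents.
KNOWLEDGE_BASE = {
    "returns": "Items can be returned within 30 days of delivery.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}


def knowledge_base_reader(message: str) -> list:
    """Return every article whose keyword appears in the message."""
    lowered = message.lower()
    return [text for key, text in KNOWLEDGE_BASE.items() if key in lowered]


def llm(message: str, context: list, history: str) -> str:
    """Placeholder for an LLM node: formats its inputs instead of calling a model."""
    sources = " ".join(context) if context else "No matching article found."
    prior_lines = len(history.splitlines())
    return f"[{prior_lines} earlier messages] Answer to '{message}' based on: {sources}"


def run_pipeline(message: str, memory: ChatMemory) -> str:
    """Run the same node sequence for every incoming message (the Input node)."""
    context = knowledge_base_reader(message)         # Knowledge Base Reader node
    reply = llm(message, context, memory.history())  # LLM node, fed by Chat Memory
    memory.turns.append(("user", message))
    memory.turns.append(("assistant", reply))
    return reply                                     # Output node
```

Calling `run_pipeline` twice with the same `ChatMemory` object shows the second call receiving the first exchange as history, while the order of steps stays identical for every message.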

Creating a Workflow chatbot

Follow these five steps to go from a pipeline to a deployed chatbot.

Step 1. Build your pipeline

Create a pipeline in the pipeline editor with the nodes your chatbot needs (Input, LLM, Chat Memory, Knowledge Base Reader, Output, etc.). For a full guide, see Pipelines.

Step 2. Add nodes to the pipeline

Configure the nodes that define your chatbot’s behavior. At a minimum, connect an Input node to receive the user’s message, an LLM node to generate a response, and an Output node to return the reply. Add a Chat Memory node for conversation history and a Knowledge Base Reader node for grounded answers.

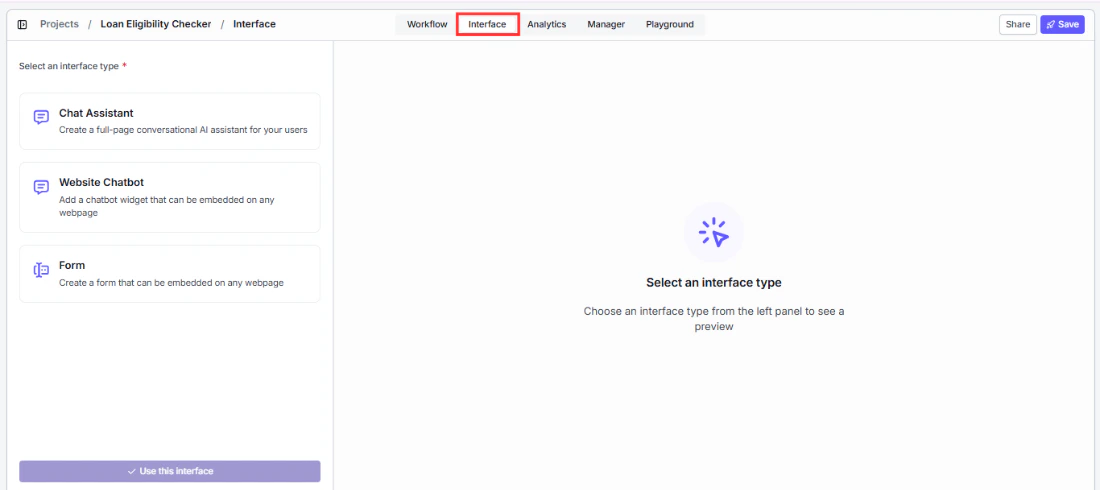
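
The minimum wiring described in this step (Input reaches the LLM, and the LLM reaches Output) can be expressed as a small graph check. The sketch below is an assumption-laden illustration rather than anything the pipeline editor exposes: it models a pipeline as a list of `(source, target)` pairs keyed by node type, and `missing_requirements` is a hypothetical helper name.

```python
from collections import defaultdict

# Node types that the minimal chatbot pipeline must contain.
REQUIRED_NODES = ["Input", "LLM", "Output"]


def reachable(edges, start):
    """Return the set of nodes reachable from start, including start itself."""
    graph = defaultdict(list)
    for source, target in edges:
        graph[source].append(target)
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        stack.extend(graph[node])
    return seen


def missing_requirements(edges):
    """List what prevents the pipeline from working as a minimal chatbot."""
    nodes = {name for edge in edges for name in edge}
    problems = [f"missing {name} node" for name in REQUIRED_NODES if name not in nodes]
    if problems:
        return problems
    if "LLM" not in reachable(edges, "Input"):
        problems.append("Input does not reach LLM")
    if "Output" not in reachable(edges, "LLM"):
        problems.append("LLM does not reach Output")
    return problems


# A pipeline with the optional Chat Memory and Knowledge Base Reader nodes added.
pipeline = [
    ("Input", "Knowledge Base Reader"),
    ("Input", "LLM"),
    ("Knowledge Base Reader", "LLM"),
    ("Chat Memory", "LLM"),
    ("LLM", "Output"),
]
print(missing_requirements(pipeline))
```

An empty list means the minimum path is in place. The optional nodes do not affect the check as long as the Input, LLM, and Output nodes stay connected.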

Step 3. Export to the Interface tab

Once your pipeline is ready, navigate to the Interface tab and select your pipeline to turn it into a chatbot.

Step 4. Personalize the interface

Customize the chatbot’s appearance and behavior — set your brand colors, welcome message, bot name, starter prompts, and avatars. For full details, see Customizing your chatbot.

Step 5. Deploy

Deploy the chatbot to make it available to your users. Choose from a shareable link, an embedded widget, Slack, WhatsApp/SMS, or API. See Sharing and deploying for details on each channel.

Voice input

You can also use your voice to send messages. Click the microphone button in the message input area, speak your question, and click send. VectorShift transcribes your audio using Whisper and sends the transcribed text as your message.

Example Workflow chatbots you can build

Workflow chatbots are ideal for finance use cases where the response process needs to be consistent, auditable, and grounded in specific data sources every time.

- Client portfolio Q&A bot: A client opens the chatbot and asks “What is my current allocation across equities and bonds?” The pipeline queries a knowledge base loaded with the client’s portfolio data, passes the retrieved context to an LLM with a compliance-aware prompt, and returns a sourced answer. Every response follows the same retrieval-then-generate sequence, so the output is predictable and easy to audit.

- Earnings report summarizer: An analyst uploads a quarterly earnings PDF and asks follow-up questions. The pipeline uses a Chat File Reader node to extract the document content, feeds it to an LLM with a summarization prompt, and keeps the full document in context for follow-up questions like “What drove the revenue increase in Q3?” The pipeline never deviates from this read-then-answer flow.

- Regulatory compliance intake bot: A user reports a potential compliance issue through the chatbot. The pipeline uses a Data Collector node to gather structured information (issue type, date, parties involved), validates that the required fields are complete, then calls an integration to create a case in the firm’s compliance management system. The same structured intake process runs for every submission.

- Market data briefing bot: Each morning, traders open the chatbot and ask for a briefing on key instruments. The pipeline calls a market data API node to fetch live prices and news, passes the results to an LLM with a briefing template, and returns a formatted daily summary. Because the pipeline is fixed, every briefing follows the same format and data sources.
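
The collect-then-act flow of the compliance intake bot above can be sketched as a small loop over required fields. This is a simplified model under stated assumptions, not the Data Collector node's actual behavior: the field names mirror the example (issue type, date, parties involved), while `handle_turn` and the `create_case` callback are hypothetical names standing in for the node and the integration call.

```python
from typing import Callable, Optional

# Fields the intake must gather before a case can be created.
REQUIRED_FIELDS = ["issue_type", "date", "parties_involved"]


def next_missing_field(collected: dict) -> Optional[str]:
    """Return the first required field that is absent or blank, else None."""
    for name in REQUIRED_FIELDS:
        if not collected.get(name, "").strip():
            return name
    return None


def handle_turn(
    collected: dict,
    field_name: Optional[str],
    answer: str,
    create_case: Callable[[dict], str],
) -> str:
    """Record one answer, then ask for the next field or create the case."""
    if field_name is not None:
        collected[field_name] = answer
    missing = next_missing_field(collected)
    if missing is not None:
        return f"Please provide: {missing}"
    # All required fields are present, so the integration is called exactly here.
    case_id = create_case(dict(collected))
    return f"Case created: {case_id}"
```

Because the integration is only called once `next_missing_field` returns `None`, a blank or skipped answer causes the same field to be requested again instead of producing an incomplete case.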

Next steps

- Chat Assistant vs Website Chatbot: Choose how your chatbot appears to users

- Agentic chatbot: Build an autonomous chatbot that picks its own tools