How to use an LLM

To use an LLM, you must provide the following inputs:

- System Prompt: instruct the LLM how to behave, either directly in the System field of the LLM node or in a text box connected to the “System” input edge. In the system prompt, reference the data sources you pass in the Prompt (e.g., “Answer the User Question using Context”).

- Prompt: define variables using double curly braces (open the variable builder by typing {{ in any text field).
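To make the double-curly-brace convention concrete, here is a minimal sketch of how placeholders could be resolved into a final prompt. The `render` helper and the variable names `input_0.text` and `knowledge_base_0.results` are illustrative assumptions, not the platform's actual resolver or node names.

```python
import re

# Matches {{ name }} placeholders; names may contain dots, e.g. input_0.text.
PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def render(template: str, values: dict[str, str]) -> str:
    """Replace each {{name}} with values[name]; unknown names are left untouched."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        return values.get(name, match.group(0))

    return PLACEHOLDER.sub(substitute, template)


# Hypothetical variable names, for illustration only.
system = "Answer the User Question using Context."
prompt = render(
    "User Question: {{input_0.text}}\nContext: {{knowledge_base_0.results}}",
    {
        "input_0.text": "What is our refund policy?",
        "knowledge_base_0.results": "Refunds are available within 30 days.",
    },
)
print(prompt)
```

Leaving unknown placeholders untouched makes a missing connection easy to spot in the rendered text.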

LLM Settings

System and Prompt

Some models (e.g., OpenAI) are trained to take two inputs: a “system” prompt that contains instructions for the model to follow, and a “prompt” input with the data sources (e.g., the user message and context). Other models (e.g., Gemini) take a single prompt in which you place both the instructions and the data sources.

Token Limits

Each model has a maximum number of input and output tokens. To adjust the limit for a particular model, change the max tokens parameter. You cannot increase max tokens beyond the maximum that the model supports.

This setting is found in the gear on the LLM node.
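The rule above can be sketched as a simple clamp. This is an illustration under assumptions: the model names and limits below are hypothetical, and the platform may reject an oversized value rather than silently lowering it.

```python
# Hypothetical per-model output limits, for illustration only;
# check your provider's documentation for real values.
MODEL_MAX_OUTPUT_TOKENS = {
    "example-model-small": 4096,
    "example-model-large": 16384,
}


def resolve_max_tokens(model: str, requested: int) -> int:
    """Return the requested max tokens, capped at the model's supported maximum."""
    if requested < 1:
        raise ValueError("max tokens must be a positive integer")
    return min(requested, MODEL_MAX_OUTPUT_TOKENS[model])


print(resolve_max_tokens("example-model-small", 10_000))
print(resolve_max_tokens("example-model-large", 512))
```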

Streaming

To stream output, click the gear on the LLM node and check “Stream Response”.

Citations

To display the sources the LLM uses, check “Show Sources” in the gear on the LLM node.

JSON Response

To have the model return structured JSON output rather than plain text, check the “Json output” box in the gear on the LLM node. When using JSON mode, you can optionally provide a JSON Schema, which tells the LLM which JSON fields to generate. For example, if the output JSON should have a “temperature” field that is an integer and a “unit” field that is either Celsius or Fahrenheit, the schema would declare “temperature” with type integer and “unit” as a string restricted to those two values.

Temperature

Temperature controls the diversity of LLM generation. For more diverse or creative generations, increase the temperature; for more deterministic responses, decrease it. This setting is found in the gear on the LLM node.

Top P

The Top P parameter constrains how many tokens the LLM considers at each generation step: only the smallest set of most likely tokens whose cumulative probability reaches P are candidates. For more diverse responses, increase Top P towards its maximum value of 1.0. This setting is found in the gear on the LLM node.

AI Model Costs

Model usage is billed based on the number of tokens that you use, counting both the tokens in the model input and the tokens generated in the model output. One token is roughly equal to 4 characters.

| Provider | Model | Input cost per 1000 tokens | Output cost per 1000 tokens |
|---|---|---|---|
| OpenAI | gpt-4.5-preview | 0.075 | 0.15 |
| OpenAI | gpt-4 | 0.03 | 0.06 |
| OpenAI | gpt-4o | 0.0025 | 0.01 |
| OpenAI | gpt-4o-audio-preview | 0.0025 | 0.01 |
| OpenAI | gpt-4o-audio-preview-2024-10-01 | 0.0025 | 0.01 |
| OpenAI | gpt-4o-mini | 0.00015 | 0.0006 |
| OpenAI | gpt-4o-mini-2024-07-18 | 0.00015 | 0.0006 |
| OpenAI | o1-mini | 0.003 | 0.012 |
| OpenAI | o1-mini-2024-09-12 | 0.003 | 0.012 |
| OpenAI | o1-preview | 0.015 | 0.06 |
| OpenAI | o1-preview-2024-09-12 | 0.015 | 0.06 |
| OpenAI | o1 | 0.015 | 0.06 |
| OpenAI | o1-2024-12-17 | 0.015 | 0.06 |
| OpenAI | o3-mini | 0.0011 | 0.0044 |
| OpenAI | chatgpt-4o-latest | 0.005 | 0.015 |
| OpenAI | gpt-4o-2024-05-13 | 0.005 | 0.015 |
| OpenAI | gpt-4o-2024-08-06 | 0.0025 | 0.01 |
| OpenAI | gpt-4o-2024-11-20 | 0.0025 | 0.01 |
| OpenAI | gpt-4-turbo-preview | 0.01 | 0.03 |
| OpenAI | gpt-4-0314 | 0.03 | 0.06 |
| OpenAI | gpt-4-0613 | 0.03 | 0.06 |
| OpenAI | gpt-4-32k | 0.06 | 0.12 |
| OpenAI | gpt-4-32k-0314 | 0.06 | 0.12 |
| OpenAI | gpt-4-32k-0613 | 0.06 | 0.12 |
| OpenAI | gpt-4-turbo | 0.01 | 0.03 |
| OpenAI | gpt-4-turbo-2024-04-09 | 0.01 | 0.03 |
| OpenAI | gpt-4-1106-preview | 0.01 | 0.03 |
| OpenAI | gpt-4-0125-preview | 0.01 | 0.03 |
| OpenAI | gpt-4-1106-vision-preview | 0.01 | 0.03 |
| OpenAI | gpt-3.5-turbo | 0.0015 | 0.002 |
| Anthropic | claude-2 | 0.008 | 0.024 |
| Anthropic | claude-2.1 | 0.008 | 0.024 |
| Anthropic | claude-3-haiku-20240307 | 0.00025 | 0.00125 |
| Anthropic | claude-3-5-haiku-20241022 | 0.001 | 0.005 |
| Anthropic | claude-3-opus-20240229 | 0.015 | 0.075 |
| Anthropic | claude-3-sonnet-20240229 | 0.003 | 0.015 |
| Anthropic | claude-3-5-sonnet-20241022 | 0.003 | 0.015 |
| Anthropic | claude-3-7-sonnet-20250219 | 0.003 | 0.015 |
| Cohere | command-r | 0.00015 | 0.0006 |
| Cohere | command-r-08-2024 | 0.00015 | 0.0006 |
| Cohere | command-light | 0.0003 | 0.0006 |
| Cohere | command-r-plus | 0.0025 | 0.01 |
| Cohere | command-r-plus-08-2024 | 0.0025 | 0.01 |
| Cohere | command-nightly | 0.001 | 0.002 |
| Cohere | command | 0.001 | 0.002 |
| Perplexity | llama-3.1-sonar-huge-128k-online | 0.005 | 0.005 |
| Perplexity | llama-3.1-sonar-large-128k-online | 0.001 | 0.001 |
| Perplexity | llama-3.1-sonar-small-128k-online | 0.0002 | 0.0002 |
| Google | text-bison | 0.00025 | n/a |
| Google | text-bison@001 | 0.00025 | n/a |
| Google | text-bison@002 | 0.00025 | n/a |
| Google | text-bison32k | 0.000125 | 0.000125 |
| Google | text-bison32k@002 | 0.000125 | 0.000125 |
| Google | text-unicorn | 0.01 | 0.028 |
| Google | text-unicorn@001 | 0.01 | 0.028 |
| Google | chat-bison | 0.000125 | 0.000125 |
| Google | chat-bison@001 | 0.000125 | 0.000125 |
| Google | chat-bison@002 | 0.000125 | 0.000125 |
| Google | chat-bison-32k | 0.000125 | 0.000125 |
| Google | chat-bison-32k@002 | 0.000125 | 0.000125 |
| Google | code-bison | 0.000125 | 0.000125 |
| Google | code-bison@001 | 0.000125 | 0.000125 |
| Google | code-bison@002 | 0.000125 | 0.000125 |
| Google | code-bison32k | 0.000125 | 0.000125 |
| Google | code-bison-32k@002 | 0.000125 | 0.000125 |
| Google | code-gecko@001 | 0.000125 | 0.000125 |
| Google | code-gecko@002 | 0.000125 | 0.000125 |
| Google | code-gecko | 0.000125 | 0.000125 |
| Google | code-gecko-latest | 0.000125 | 0.000125 |
| Google | codechat-bison@latest | 0.000125 | 0.000125 |
| Google | codechat-bison | 0.000125 | 0.000125 |
| Google | codechat-bison@001 | 0.000125 | 0.000125 |
| Google | codechat-bison@002 | 0.000125 | 0.000125 |
| Google | codechat-bison-32k | 0.000125 | 0.000125 |
| Google | codechat-bison-32k@002 | 0.000125 | 0.000125 |
| Google | gemini-pro | 0.0005 | 0.0015 |
| Google | gemini-1.0-pro | 0.0005 | 0.0015 |
| Google | gemini-1.0-pro-001 | 0.0005 | 0.0015 |
| Google | gemini-1.5-pro | 0.00125 | 0.005 |
| Google | gemini-1.5-pro-002 | 0.00125 | 0.005 |
| Google | gemini-1.5-pro-001 | 0.00125 | 0.005 |
| Google | gemini-1.5-flash | 0.000075 | 0.0003 |
| Google | gemini-1.5-flash-exp-0827 | 0.000004688 | 0.0000046875 |
| Google | gemini-1.5-flash-002 | 0.000075 | 0.0003 |
| Google | gemini-1.5-flash-001 | 0.000075 | 0.0003 |
| Google | gemini-1.5-flash-preview-0514 | 0.000075 | 0.0000046875 |
| Google | gemini-2.0-flash-exp | 0 | 0 |
| Google | gemini-2.0-flash-thinking-exp-01-21 | 0 | 0 |
| Google | gemini-2.0-flash-thinking-exp | 0 | 0 |
| Google | gemini-2.0-flash-lite-preview-02-05 | 0.000075 | 0.0003 |
| Google | gemini-2.0-flash-001 | 0.00015 | 0.0006 |
| Amazon Bedrock | amazon.titan-text-lite-v1 | 0.0003 | 0.0004 |
| Amazon Bedrock | amazon.titan-text-express-v1 | 0.0013 | 0.0017 |
| Amazon Bedrock | amazon.nova-micro-v1:0 | 0.000035 | 0.00014 |
| Amazon Bedrock | amazon.nova-lite-v1:0 | 0.00006 | 0.00024 |
| Amazon Bedrock | amazon.nova-pro-v1:0 | 0.0008 | 0.0032 |
| Amazon Bedrock | meta.llama3-8b-instruct-v1:0 | 0.0003 | 0.0006 |
| Amazon Bedrock | us.meta.llama3-1-8b-instruct-v1:0 | 0.0003 | 0.0006 |
| Amazon Bedrock | us.meta.llama3-1-70b-instruct-v1:0 | 0.0015 | 0.002 |
| Amazon Bedrock | us.meta.llama3-2-1b-instruct-v1:0 | 0.0004 | 0.0008 |
| Amazon Bedrock | us.meta.llama3-2-3b-instruct-v1:0 | 0.0005 | 0.001 |
| Amazon Bedrock | us.meta.llama3-2-11b-instruct-v1:0 | 0.001 | 0.0015 |
| Amazon Bedrock | us.meta.llama3-2-90b-instruct-v1:0 | 0.002 | 0.0025 |
| Azure OpenAI | gpt-3.5-turbo | 0.0015 | 0.002 |
| XAI | grok-2 | 0.002 | 0.01 |
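As a rough worked example of the billing arithmetic, the sketch below estimates the cost of one call from per-1000-token prices. The three prices are copied from rows of the table above. The `estimate_tokens` and `estimate_cost` helpers are illustrative assumptions, and the 4-characters-per-token rule is only an approximation, so actual billed amounts may differ.

```python
import math

# (input price, output price) per 1000 tokens, copied from the table above.
PRICES_PER_1K = {
    "gpt-4o": (0.0025, 0.01),
    "gpt-4o-mini": (0.00015, 0.0006),
    "claude-3-5-sonnet-20241022": (0.003, 0.015),
}


def estimate_tokens(text: str) -> int:
    """Approximate the token count using the 4-characters-per-token rule of thumb."""
    return math.ceil(len(text) / 4)


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost = input tokens at the input rate plus output tokens at the output rate."""
    input_price, output_price = PRICES_PER_1K[model]
    return input_tokens / 1000 * input_price + output_tokens / 1000 * output_price


# 2000 input tokens and 500 output tokens on gpt-4o:
# 2 * 0.0025 + 0.5 * 0.01 = 0.01
cost = estimate_cost("gpt-4o", input_tokens=2000, output_tokens=500)
print(f"{cost:.4f}")
```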

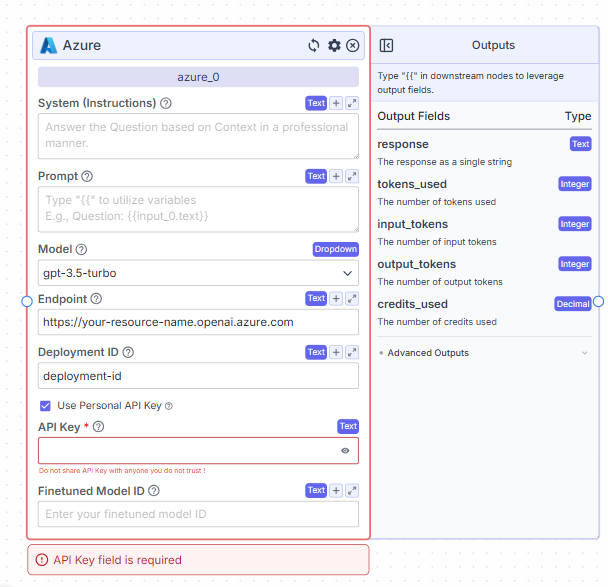

Azure LLM

Use the Azure LLM node to run gpt-3.5-turbo, or any other model, from Azure OpenAI.

To connect with your own model:

To get started, you’ll need to have access to an Azure OpenAI resource and a deployed model (for example, gpt-3.5-turbo).

The Endpoint field is where you enter the base URL of your Azure OpenAI resource. It usually looks like https://your-resource-name.openai.azure.com. Make sure you do not include a trailing slash.

In the Deployment ID field, enter the name of the deployment you’ve configured in your Azure OpenAI service. This must exactly match the ID used in Azure.

If you’re using a personal API key, check the Use Personal API Key option. Then, paste your Azure API key into the API Key field. This key is available in the Azure portal under your resource’s Keys and Endpoint section. Note that this field is required when using a personal key, and you should not share this key with anyone you do not trust.

The Finetuned Model ID field is optional. You only need to fill it out if you’re working with a fine-tuned version of the model.

A common configuration might look like this: the model is set to gpt-3.5-turbo, the endpoint is https://my-openai-resource.openai.azure.com, and the deployment ID is gpt-35-turbo. The prompt could be something like Answer the following: {{input_0.text}}.

Some common issues to watch for include leaving the API Key field blank, using the wrong deployment ID, or including a trailing slash in the endpoint URL. Always double-check that all values exactly match your Azure setup.
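The common issues above can be checked before saving the node. The sketch below is an assumption-laden illustration: `AzureLLMConfig`, `find_config_issues`, and `chat_completions_url` are hypothetical helpers, not part of the platform, and the `api-version` value is only an example that you should replace with one your resource supports. The URL layout follows the Azure OpenAI REST convention, which shows why a trailing slash on the endpoint would produce a malformed path.

```python
from dataclasses import dataclass


@dataclass
class AzureLLMConfig:
    """Hypothetical container mirroring the node's fields."""
    endpoint: str
    deployment_id: str
    api_key: str = ""
    use_personal_api_key: bool = False


def find_config_issues(cfg: AzureLLMConfig) -> list[str]:
    """Return a list of human-readable problems; empty means no issue was found."""
    issues = []
    if cfg.endpoint.endswith("/"):
        issues.append("endpoint must not include a trailing slash")
    if not cfg.deployment_id.strip():
        issues.append("deployment ID is empty")
    if cfg.use_personal_api_key and not cfg.api_key:
        issues.append("API key is required when using a personal key")
    return issues


def chat_completions_url(cfg: AzureLLMConfig, api_version: str = "2024-02-01") -> str:
    """Build the chat completions URL; api_version here is an example value."""
    return (
        f"{cfg.endpoint}/openai/deployments/{cfg.deployment_id}"
        f"/chat/completions?api-version={api_version}"
    )


config = AzureLLMConfig(
    endpoint="https://my-openai-resource.openai.azure.com",
    deployment_id="gpt-35-turbo",
    api_key="example-key",  # placeholder, not a real key
    use_personal_api_key=True,
)
issues = find_config_issues(config)
print(issues or chat_completions_url(config))
```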

For more information, refer to the official Azure OpenAI documentation at [learn.microsoft.com](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference).

Custom LLMs

Want to connect to a specialized model provider or a locally hosted LLM? Use the custom LLM node. It supports sending requests to models that are compatible with the OpenAI Chat API format, so you can use models from your own accounts with LLM providers such as TogetherAI and Replicate. The custom LLM node requires the following parameters:

- model

- api_key

- base_url
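To show how the three parameters fit together, here is a minimal sketch of a request in the OpenAI Chat API format, built with the Python standard library only. It is an illustration, not the node's actual implementation: the base URL, model name, and key are placeholder assumptions, and the request is constructed but deliberately not sent.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, system, prompt,
                       temperature=0.7, top_p=1.0, max_tokens=256):
    """Build (but do not send) an OpenAI-compatible chat completions request."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": system},  # instructions
            {"role": "user", "content": prompt},     # data sources / user message
        ],
        "temperature": temperature,
        "top_p": top_p,
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values, for illustration only.
request = build_chat_request(
    base_url="https://api.together.xyz/v1",
    api_key="example-key",
    model="example-org/example-model",
    system="Answer the User Question using Context.",
    prompt="User Question: What is our refund policy?",
)
print(request.full_url)
# To actually send it: urllib.request.urlopen(request)
```

The system and user messages correspond to the System and Prompt inputs described earlier, and the optional arguments map to the temperature, Top P, and max tokens settings.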

Local Models

Models hosted locally on your computer are good for prototyping, experimenting with new models, and saving costs. You can access your local models by setting up a connection to a locally running LLM server. Make sure to find a secure way to forward your locally running server’s port to the internet.

LM Studio

Follow the LM Studio instructions to start a local server. The standard API key for LM Studio is lm-studio.

Ollama

Start a local LLM server using the Ollama CLI.

Prompt Engineering Guidelines

Be as specific as possible: if the output should be one sentence, or should be written in the first person, include those instructions in the text block connected to the system prompt. Within the system prompt, you can also specify things like:

- The tone you want the model to use (e.g., “Respond in a professional manner”).

- Data sources and how the model should use them (e.g., “Use datasource X when the question is related to sales; use datasource Y when the question is related to customer support”).

- Specific information related to your company or situation that the model can reference (e.g., a Calendly link).

- Specific text that you want the model to output in certain situations (e.g., “If you are unable to answer the question, respond with ‘I am unable to answer the question’”).

- What type of reasoning to use. Here, it is important to think through, step by step, how you would actually perform the action yourself, and then encode those steps into the system prompt.
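The guidelines above can be sketched as a small helper that assembles a system prompt from its parts. This is one possible layout, not a required format: the `build_system_prompt` function, the section labels, and the example values (including the Calendly URL) are hypothetical.

```python
def build_system_prompt(task, tone=None, data_source_rules=(), reference_info=(),
                        fallback=None, reasoning_steps=()):
    """Join the optional guideline sections into one system prompt string."""
    sections = [task]
    if tone:
        sections.append(f"Tone: {tone}")
    if data_source_rules:
        sections.append("Data sources:\n" + "\n".join(f"- {r}" for r in data_source_rules))
    if reference_info:
        sections.append("Reference information:\n" + "\n".join(f"- {r}" for r in reference_info))
    if fallback:
        sections.append(f'If you are unable to answer the question, respond with "{fallback}"')
    if reasoning_steps:
        numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(reasoning_steps, start=1))
        sections.append("Reason through these steps:\n" + numbered)
    return "\n\n".join(sections)


# Example values are placeholders, for illustration only.
system_prompt = build_system_prompt(
    task="Answer the User Question using Context. Reply in one sentence.",
    tone="Respond in a professional manner.",
    data_source_rules=[
        "Use datasource X when the question is related to sales.",
        "Use datasource Y when the question is related to customer support.",
    ],
    reference_info=["Booking link: https://calendly.com/example"],
    fallback="I am unable to answer the question",
    reasoning_steps=["Identify the topic of the question.", "Pick the matching datasource."],
)
print(system_prompt)
```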